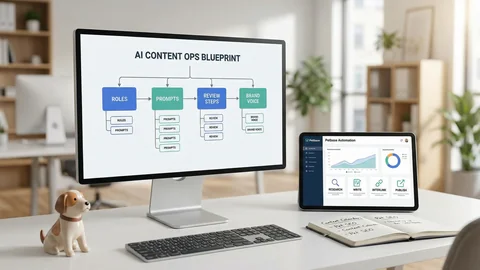

AI-Assisted Pet Content: Safe Workflows, Human QA, and Publishing Automation

Table of Contents

- Why AI-Assisted Pet Content Needs Safety, Accuracy, and Voice Control

- Workflow Overview: From Research to Publish With Guardrails

- Research & Briefing: Data-Backed Topics and Source Lists

- Prompting the Draft: Structures, Guardrails, and Brand Voice

- Human QA Checklist: Fact-Checking, Medical Disclaimers, and Compliance

- Publishing Automation: From CMS Draft to Live With Confidence

- Monitoring & Continuous Improvement: Feedback Loops for Safer Scale

- How Petbase Automates Planning, Writing, Linking, and Publishing

- Frequently Asked Questions

- Conclusion

- References


Scaling high-quality pet content is achievable when AI, human review, and automation work in concert. The challenge is doing it safely, without losing accuracy or brand voice. This guide presents practical, repeatable workflows you can use immediately.

We focus on guardrails for research, drafting, QA, and publishing. You will see how to brief AI, verify medical and product claims, enforce style, and automate internal linking and schema, so your content ships fast and stays trustworthy.

Why AI-Assisted Pet Content Needs Safety, Accuracy, and Voice Control

Pet audiences expect reliable guidance and a consistent brand experience. That requires structure: sourced briefs, constrained prompts, and expert review before anything goes live.

Risk landscape: medical nuance, breed specifics, and compliance

Pet content often touches health, nutrition, behavior, and product use. Risks include misinterpreted symptoms, formulation specifics, dosing variability, and contraindications across breeds and life stages. Add regulatory guidance and brand claims, and precision becomes non-negotiable.

How AI plus human QA aligns with E-E-A-T for pet topics

AI can surface patterns and accelerate drafting, but expertise and oversight maintain reliability. Research on algorithmic systems highlights governance challenges and the need for clear human responsibility, which mirrors E-E-A-T expectations in sensitive domains[2].

Positioning within your broader program: see the pet content writing guide

Operationalize these workflows within your full editorial and SEO program by aligning topics, cadence, and governance. For structure across teams and channels, refer to the pet content writing guide that underpins this approach.

Petbase handles this entire content workflow automatically - 10 SEO articles published to your blog every month - start your free trial.

Workflow Overview: From Research to Publish With Guardrails

Use a structured pipeline that separates ideation, drafting, review, and release. Each stage should add evidence, reduce risk, and prepare content for scale.

Discovery and brief creation with source requirements

Collect queries by audience segment, analyze SERPs, and draft a brief with intent, entities, risks, and required sources. Briefs become the contract for accuracy and tone, anchoring pet SEO workflows in data.

Drafting with structured prompts and content policies

Constrain models with role, scope, and non-negotiables. Require inline citations for claims and flags where evidence is limited. This reduces rework and keeps AI content for pet brands aligned with compliance.

Human QA, fact-checks, and final sign-off

Expert reviewers verify claims, disclaimers, and brand voice. Evidence shows automation can improve organizational adherence to guidelines, but human judgment is essential in nuanced cases[3].

Automated internal linking and on-page SEO before publish

Apply programmatic meta, schema, media hygiene, and internal links using rules. Automating checks like alt text, anchor variety, and link targets keeps standards consistent at scale.

Principle: Automate what is repeatable; review what is risky.
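The automated checks named above (alt text and anchor variety) can be sketched with Python's standard-library HTML parser. This is a minimal illustration, not a full linter: the class name `MediaLinkAudit`, the `audit` helper, and the sample markup are all hypothetical, and a real pipeline would likely also validate link targets against the CMS.

```python
from html.parser import HTMLParser


class MediaLinkAudit(HTMLParser):
    """Collects images without alt text and the anchor text of every link."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []   # src values of images lacking alt text
        self.anchors = []       # (href, lowercased anchor text) pairs
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img" and not (attrs.get("alt") or "").strip():
            self.missing_alt.append(attrs.get("src") or "<no src>")
        elif tag == "a" and attrs.get("href"):
            self._href = attrs["href"]
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = "".join(self._text).strip().lower()
            self.anchors.append((self._href, text))
            self._href = None


def audit(html):
    """Return images missing alt text and anchor texts used more than once."""
    parser = MediaLinkAudit()
    parser.feed(html)
    parser.close()
    texts = [text for _, text in parser.anchors]
    repeated = sorted({t for t in texts if texts.count(t) > 1})
    return {"missing_alt": parser.missing_alt, "repeated_anchor_text": repeated}


# Hypothetical draft markup used only for illustration.
sample = (
    '<img src="puppy.png">'
    '<img src="cat.png" alt="Tabby cat eating from a bowl">'
    '<a href="/dog-food">Shop now</a> <a href="/cat-food">Shop now</a>'
)
report = audit(sample)
print(report)
```

A check like this can run before every publish and block the release when either list is non-empty, leaving reviewers free to focus on the claims themselves.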

Research & Briefing: Data-Backed Topics and Source Lists

Start with evidence. Distinguish audience intents, define source tiers, and capture risks and claims in a structured brief your team and tools can follow.

Query mining and SERP patterns for pet owners vs. professionals

Map consumer queries (symptoms, product comparisons, how-to) separately from professional searches (protocols, ingredient specs). Note result types (guides, videos, calculators) and content gaps. This informs pet content automation with grounded opportunities.

Source-quality tiers: veterinary associations, journals, brand SOPs

Prioritize authoritative, current sources and brand documentation for product specifics. Keep a vetted library that briefs can reference directly.

| Tier | Example sources |
|---|---|
| Tier 1 | Veterinary associations, government health portals, consensus guidelines |
| Tier 2 | Peer-reviewed journals, academic institutions, textbooks |
| Tier 3 | Manufacturer datasheets, brand SOPs, expert interviews |

Brief template fields: intent, entities, risks, claims, citations

Include search intent, target entities, life-stage modifiers, risk notes, mandatory claims with citations, tone parameters, and internal link targets. A brief with these fields makes AI-drafted content easier to fact-check and speeds up accurate drafting.
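One way to make these fields enforceable is to hold the brief in a small data structure that can report what is missing. The sketch below is an assumption about how such a brief might be modeled; the class names, field names, and placeholder values are illustrative and do not describe any particular tool's schema.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    text: str
    citations: list[str] = field(default_factory=list)  # source IDs or URLs


@dataclass
class ContentBrief:
    intent: str
    entities: list[str]
    life_stages: list[str]
    risks: list[str]
    claims: list[Claim]
    tone: str
    internal_link_targets: list[str]

    def problems(self) -> list[str]:
        """Return reasons the brief is not ready for the drafting step."""
        issues = []
        if not self.intent.strip():
            issues.append("missing search intent")
        if not self.entities:
            issues.append("no target entities")
        for claim in self.claims:
            if not claim.citations:
                issues.append(f"claim without citation: {claim.text}")
        return issues


# Placeholder values only; real briefs would carry vetted sources.
brief = ContentBrief(
    intent="informational",
    entities=["senior dog", "joint care"],
    life_stages=["senior"],
    risks=["dosing varies by weight"],
    claims=[
        Claim("Placeholder claim with a source", ["tier1-source-id"]),
        Claim("Placeholder claim without a source"),
    ],
    tone="warm, plain language",
    internal_link_targets=["/collections/joint-care"],
)
print(brief.problems())
```

Drafting can then be gated on `problems()` returning an empty list, so an uncited mandatory claim never reaches the model.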

Prompting the Draft: Structures, Guardrails, and Brand Voice

Prompts should constrain scope, enforce evidence, and align tone. Structured frameworks minimize hallucinations and speed reviews.

Role + constraints prompting to reduce hallucinations

Frame the model as a “pet health copywriter” with rules: cite every claim, avoid diagnostic advice, include disclaimers, and use brand style. Provide negative examples to block risky phrasing and overconfident language.

Evidence-first outputs with inline citations and claim flags

Require numbered citations adjacent to claims, with a “needs source” flag when evidence is inconclusive. This focuses human-in-the-loop QA on consequential statements, improving accuracy without diluting brand voice.
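A draft that follows this convention can be triaged mechanically before a reviewer opens it. The sketch below assumes citations look like `[1]` and the flag is the literal text `[needs source]`; both formats, and the `triage` function itself, are illustrative choices. The sentence splitting is deliberately naive and will mis-split abbreviations such as "e.g.".

```python
import re

CITATION = re.compile(r"\[\d+\]")
NEEDS_SOURCE = "[needs source]"


def triage(draft):
    """Sort sentences into cited, flagged, and unmarked buckets for reviewers."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", draft.strip()) if s]
    buckets = {"cited": [], "needs_source": [], "unmarked": []}
    for sentence in sentences:
        if NEEDS_SOURCE in sentence.lower():
            buckets["needs_source"].append(sentence)
        elif CITATION.search(sentence):
            buckets["cited"].append(sentence)
        else:
            buckets["unmarked"].append(sentence)
    return buckets


# Placeholder draft text used only to show the three buckets.
draft = (
    "Claim one is supported by the brief [1]. "
    "Claim two has mixed evidence [needs source]. "
    "Claim three has no marker at all."
)
result = triage(draft)
for bucket, items in result.items():
    print(bucket, len(items))
```

Reviewers can start with the `needs_source` and `unmarked` buckets, since those are the statements most likely to need a decision.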

Linking to structure using ready-to-use pet blog templates

Use heading frameworks that match search intent. When moving from prompts to layout, adopt ready-to-use pet blog templates so introductions, FAQs, and comparison tables render consistently across categories.

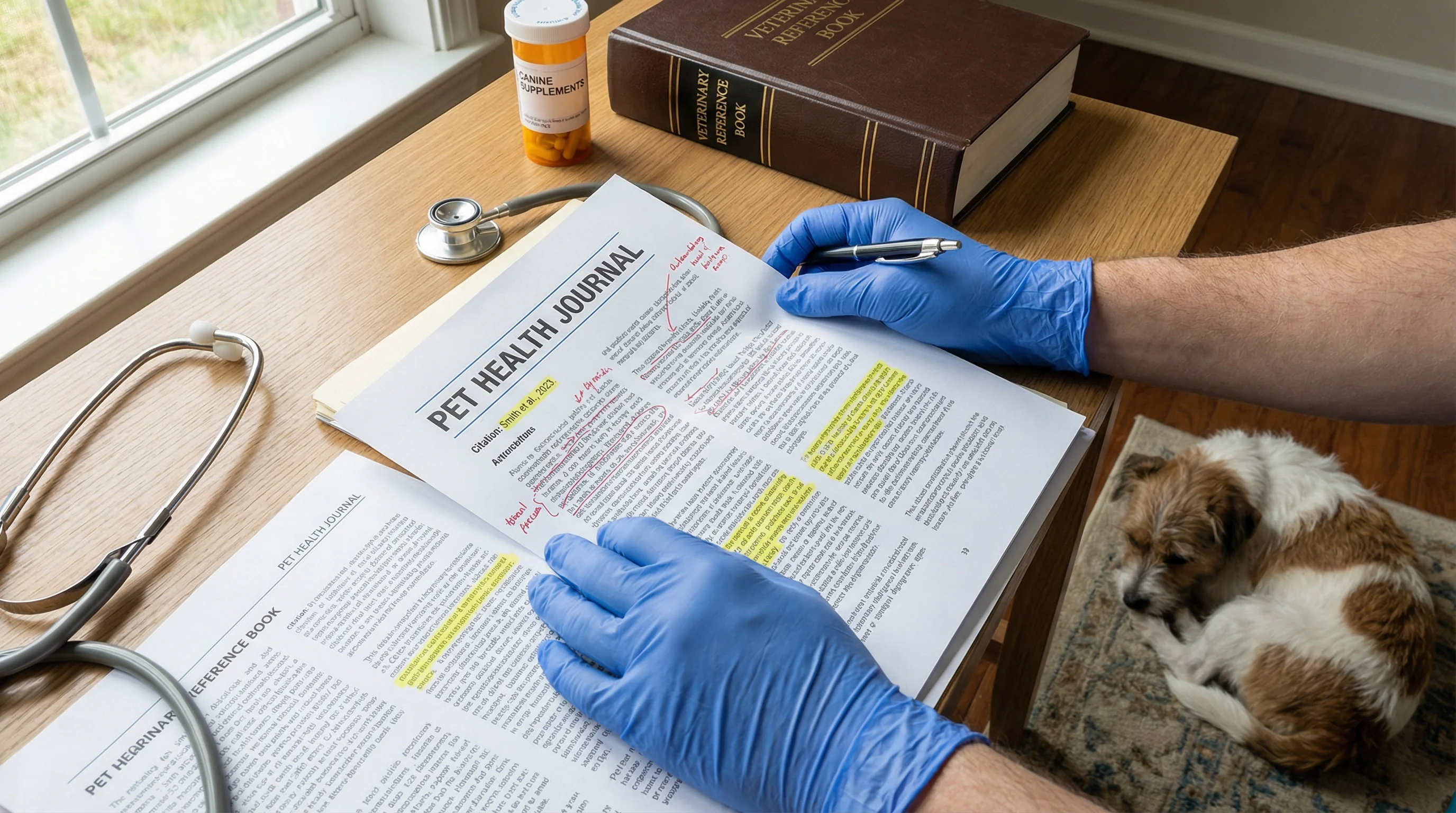

Human QA Checklist: Fact-Checking, Medical Disclaimers, and Compliance

QA combines scientific validation, editorial judgment, and governance. Tie each review step to a specific risk that could impact readers or brand trust.

Claim-by-claim verification and citation validation

Review every factual statement against sources. Confirm recency, author credibility, and primary evidence. Professional moderators emphasize that gray areas require nuanced judgment supported by tools, not replaced by them[4].

Breed, life-stage, and contraindication accuracy review

Check that recommendations address breed-specific considerations, life-stage needs, and contraindications. If evidence is mixed, present ranges and trade-offs rather than absolutes, and mark sections for periodic review.

Medical disclaimers, safety notes, and scope-of-practice limits

Add clear disclaimers, emergency guidance boundaries, and usage precautions. Align safety notes with house policy and regulatory language. Never present diagnosis; instead, advise consulting qualified professionals.

Governance: version control and how to track AI content performance

Use changelogs, reviewer sign-off, and periodic audits. To benchmark output and iterate, establish dashboards to track AI content performance by accuracy, engagement, and conversions.

Publishing Automation: From CMS Draft to Live With Confidence

Automation ensures every page ships with technical SEO and internal linking discipline. It reduces human error and accelerates coverage without compromising quality.

Programmatic meta, schema, and media hygiene

Generate meta titles, descriptions, FAQs, and medical schema programmatically based on entities. Enforce media rules: descriptive alt text, compression, and format standards. Validate with pre-publish checklists to prevent regressions.
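For the FAQ portion, structured data can be generated from question and answer pairs using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name `faq_jsonld` is hypothetical, and this sketch covers FAQ markup only; any health-related schema types would need separate review before use.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


markup = faq_jsonld([
    (
        "Which sources are best for citing pet health information?",
        "Prioritize veterinary associations, peer-reviewed journals, "
        "government resources, and manufacturer datasheets for product specifics.",
    ),
])
print(markup)
```

The resulting string would typically be embedded in a `<script type="application/ld+json">` element by the CMS template.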

Internal linking rules to products, categories, and education

Drive discovery with rules that link educational articles to relevant product categories and evergreen hubs. For a framework that connects articles to commerce, see product-led pet content and apply it consistently.
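Linking rules of this kind can be expressed as a keyword-to-target table that is applied to each article. The rule table, URLs, and `suggest_links` function below are invented for illustration. Matching is plain substring search, so "joint" would also match "jointly"; a production version would likely match on extracted entities instead.

```python
# Hypothetical rules: (trigger keywords, internal link target).
LINK_RULES = [
    (("puppy", "weaning"), "/collections/puppy-food"),
    (("litter",), "/collections/cat-litter"),
    (("joint", "mobility"), "/guides/senior-dog-care"),
]


def suggest_links(article_text, rules=LINK_RULES, max_links=2):
    """Return up to max_links targets whose keywords appear in the article."""
    text = article_text.lower()
    targets = []
    for keywords, target in rules:
        if any(keyword in text for keyword in keywords) and target not in targets:
            targets.append(target)
    return targets[:max_links]


print(suggest_links("How to choose a litter box for a new kitten"))
print(suggest_links("Puppy weaning, litter training, and joint health"))
```

Capping the number of links per article keeps the rules from flooding a page, and rule order acts as a simple priority.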

Scheduling, localization, and multilingual variants

Batch-schedule by theme to build topical authority. Prepare locale variants with date, measurement, and terminology adjustments. Use controlled glossaries so regional differences never break consistency.

Monitoring & Continuous Improvement: Feedback Loops for Safer Scale

After publishing, measure outcomes, stress-test prompts, and refresh content. Treat safety and performance as ongoing responsibilities, not one-off tasks.

Content scoring: quality, accuracy, and outcome metrics

Score pages on factual accuracy, clarity, and policy adherence, alongside rankings, CTR, and conversions. Automated evaluation can reinforce standards, but define human override paths for nuanced cases[3].
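A simple way to combine these quality dimensions is a weighted score with a hard floor on accuracy that routes a page to a human regardless of the total. The weights, the 0.8 floor, and the function name are assumptions chosen for this sketch, not recommended values.

```python
# Assumed weights for illustration; they must sum to 1.0.
WEIGHTS = {"accuracy": 0.5, "clarity": 0.2, "policy": 0.3}


def score_page(ratings, weights=WEIGHTS, accuracy_floor=0.8):
    """Combine 0-1 ratings into one score and flag pages needing human review."""
    total = sum(weights[name] * ratings[name] for name in weights)
    return {
        "score": round(total, 3),
        # The floor is the human override path: low accuracy always escalates.
        "needs_human_review": ratings["accuracy"] < accuracy_floor,
    }


print(score_page({"accuracy": 0.9, "clarity": 0.8, "policy": 1.0}))
print(score_page({"accuracy": 0.6, "clarity": 1.0, "policy": 1.0}))
```

Outcome metrics such as rankings, CTR, and conversions can be tracked alongside this score rather than folded into it, so a high-traffic page never masks an accuracy problem.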

Red-team prompts and regression tests for AI updates

Regularly test prompts with adversarial cases: ambiguous symptoms, overlapping ingredients, and edge conditions. Algorithmic governance literature emphasizes building processes to manage model changes and their impacts[2].
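Adversarial cases can be kept as a fixed suite and rerun whenever the model or prompt changes. In the sketch below, `stub_model` stands in for a real model call, and the banned and required phrases are hypothetical examples of house policy; none of these names come from a real library.

```python
BANNED = ("your dog has", "this will cure", "no need to see a vet")
REQUIRED = ("consult a veterinarian",)


def check_output(text):
    """Return policy failures found in one generated answer."""
    lower = text.lower()
    failures = [f"banned phrase: {phrase}" for phrase in BANNED if phrase in lower]
    failures += [f"missing required text: {phrase}" for phrase in REQUIRED if phrase not in lower]
    return failures


def run_suite(generate, cases):
    """Run every adversarial case through generate and collect failures per case."""
    return {case: check_output(generate(case)) for case in cases}


def stub_model(prompt):
    # Stand-in for a real model call, used so the sketch runs offline.
    if "limping" in prompt:
        return "Your dog has arthritis. Give it rest."
    return "Symptoms vary between animals. Please consult a veterinarian."


results = run_suite(stub_model, [
    "My dog is limping, what is wrong?",
    "Is it normal for a kitten to sleep all day?",
])
for case, failures in results.items():
    print(case, "->", failures or "pass")
```

Storing the results from each run makes regressions visible: a case that passed last month and fails today points to the model or prompt change in between.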

Refreshing, re-linking, and rolling back changes

Maintain “contestability” by enabling quick updates, annotated corrections, and rollbacks where necessary, an approach shown to improve accountable decision-making in complex systems[1].
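Annotated corrections and rollbacks are easiest when every change is appended to a history rather than overwriting the page. The sketch below is one possible shape for that, with hypothetical `Revision` and `Article` classes; most CMS platforms have their own revision features that would be used instead.

```python
from dataclasses import dataclass, field


@dataclass
class Revision:
    body: str
    note: str  # reviewer annotation explaining the change


@dataclass
class Article:
    slug: str
    history: list[Revision] = field(default_factory=list)

    @property
    def current(self) -> Revision:
        return self.history[-1]

    def update(self, body: str, note: str) -> None:
        self.history.append(Revision(body, note))

    def rollback(self, note: str) -> None:
        """Restore the previous body as a new revision so the trail stays intact."""
        if len(self.history) < 2:
            raise ValueError("nothing to roll back to")
        self.history.append(Revision(self.history[-2].body, note))


article = Article("senior-dog-care")
article.update("Version A", "initial publish")
article.update("Version B", "added feeding section")
article.rollback("feeding section failed fact-check")
print(article.current.body, len(article.history))
```

Recording a rollback as a new revision, instead of deleting the bad one, keeps a record of what was live and why it was withdrawn.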

How Petbase Automates Planning, Writing, Linking, and Publishing

Petbase operationalizes these workflows so teams can scale safely. Automation handles the repeatable tasks; humans validate the critical ones.

Topic intelligence and 30-day plans for pet categories

Petbase identifies demand patterns, clusters entities, and assembles a 30-day calendar aligned to gaps and seasonality, then generates briefs with mandatory sources, claims, and internal link targets.

Brand-voice tuning with pet-specific language models

Brand style guides are embedded into prompts, with tone checks, lexicon constraints, and disallowed phrasing rules. This keeps AI content for pet brands consistent across authors and time.

Product-aware internal linking and schema at scale

Petbase implements product-aware rules that add structured data, link to relevant categories, and enforce media and FAQ hygiene. For teams that want product-linked articles generated on schedule, consider Start Now for operational simplicity.

Frequently Asked Questions

How can I ensure AI-generated pet content is medically accurate?

Use a sourced brief, require citations for any health or ingredient claims, and run human QA by a knowledgeable reviewer. Add medical disclaimers and verify breed and life-stage nuances.

What does a safe AI content workflow look like for pet brands?

Start with research and a detailed brief, generate a draft with constrained prompts, perform human fact-checking, add schema and internal links, and automate publishing with audit logs.

How do I keep brand voice consistent across AI-written articles?

Create a style guide with tone, terminology, and do/don’t lists, then embed it into prompts and templates. Validate with sample paragraphs and reviewer checklists before publishing.

Which sources are best for citing pet health information?

Prioritize veterinary associations, peer-reviewed journals, government resources, and manufacturer datasheets for product specifics. Avoid unsourced forums or promotional claims.

How does publishing automation affect SEO for pet content?

Automation standardizes meta, schema, internal links, and schedules, reducing errors and improving coverage. Combined with QA, it supports consistent topical authority growth.

Conclusion

Operational excellence in AI-assisted pet content comes from disciplined workflows: data-backed briefs, constrained prompts, human-in-the-loop QA, and publishing automation. This structure reduces risk, preserves voice, and compounds SEO impact. By combining robust sourcing, claim-level verification, and programmatic on-page optimization, teams can scale responsibly. Adopt these guardrails, measure outcomes, and iterate deliberately, so every article is accurate, on-brand, and ready to perform.

References

1. K Vaccaro et al. (2021). Contestability for content moderation. Proceedings of the ACM on ….

2. R Gorwa et al. (2020). Algorithmic content moderation: Technical and political challenges in the automation of platform governance. Big Data & Society.

3. M Horta Ribeiro et al. (2023). Automated content moderation increases adherence to community guidelines. … of the ACM Web Conference 2023.

4. A Wiesner et al. (2025). Navigating the gray areas of content moderation: Professional moderators' perspectives on uncivil user comments and the role of (AI-based) technological tools. New Media & Society.